Trump may review AI models before public release

President Trump could review advanced AI models before companies release them publicly under a plan the White House is weighing to assess security and consumer risks.

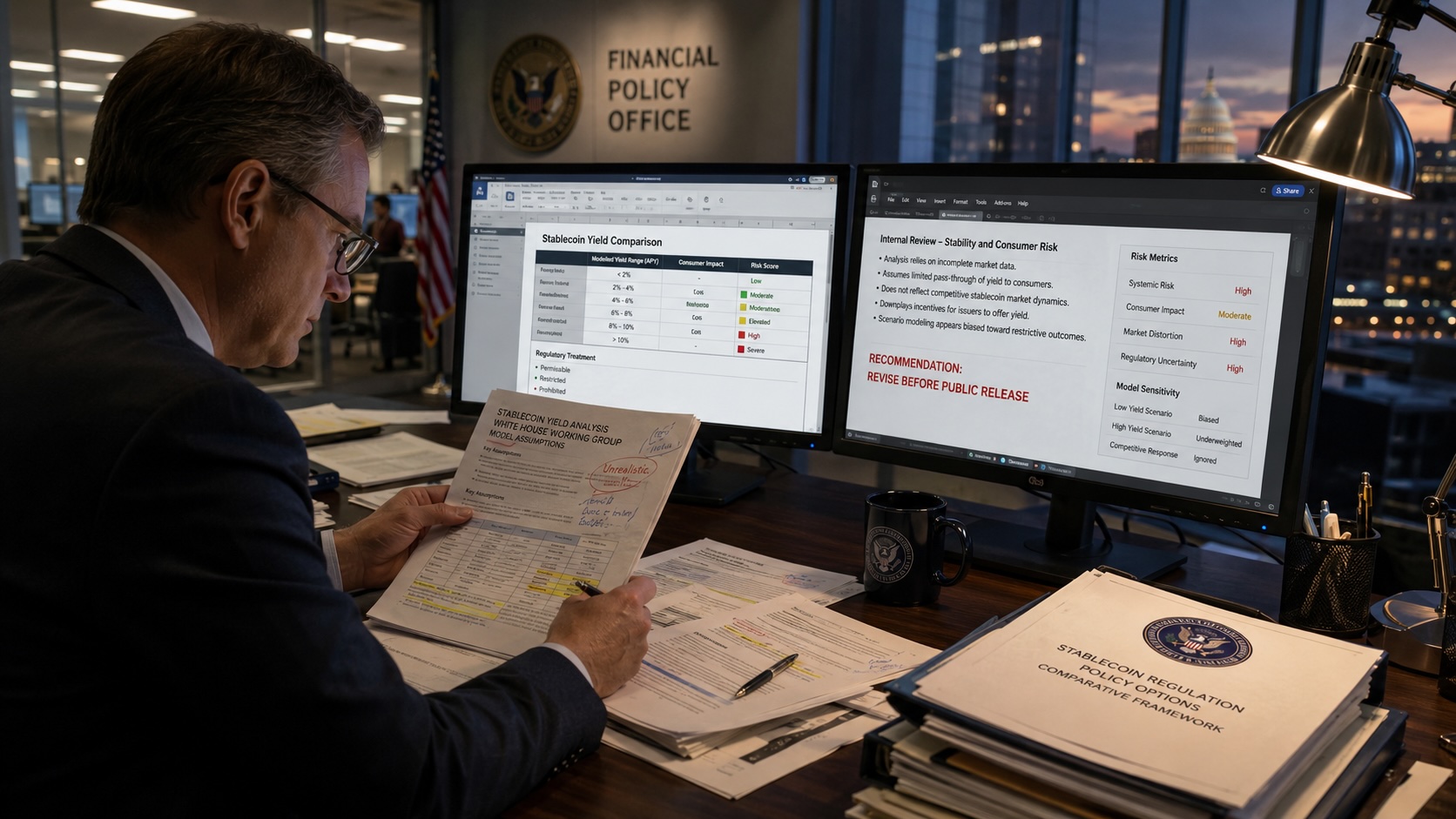

The Trump administration is considering a policy that would allow federal authorities to review advanced artificial intelligence models before companies make them publicly available. White House advisers are discussing the plan as a way to assess national security and consumer safety risks and to set conditions for deployment.

Officials are weighing several mechanisms that would require developers to submit certain models for review when they meet predefined capability or usage criteria. Options under discussion include mandatory review for models that exceed size or performance thresholds, review limited to systems intended for high-impact applications, and a voluntary certification process backed by regulatory authority. Implementation could involve agencies with technical expertise, such as the Commerce Department and the Department of Homeland Security, along with standards bodies that would set testing protocols.

The review process is intended to identify risks including large-scale disinformation campaigns, automated cyber intrusions, misuse in weapon systems and threats to critical infrastructure. Proponents argue that examining models before widespread distribution would allow regulators to require safeguards such as red-team testing, safety benchmarks or access controls.

Technical approaches under consideration include a capability-based trigger that would prompt review when models demonstrate emergent behaviors or exceed performance thresholds on evaluated tasks, and a data- and access-based approach that would focus on models trained on sensitive data or designed for broad public access. The administration is also exploring the use of third-party auditors to validate safety claims while protecting proprietary model details.

Industry groups and technology companies are expected to raise concerns about accelerated review timelines, protection of trade secrets and potential effects on innovation. Startups and research labs have warned that mandatory pre-release reviews could slow product development and raise compliance costs that larger firms can absorb more easily. Legal experts have identified questions about how a pre-release review regime would interact with existing administrative law, intellectual property protections and First Amendment doctrine.

The discussion is occurring alongside international efforts. The European Union has advanced a risk-based AI regulatory framework, and other governments are pursuing export controls and technical standards. Some U.S. policymakers say aligning review requirements with allies could reduce regulatory friction for firms operating globally.

Members of Congress could pursue legislation to define the scope and limits of any executive authority over AI, including legal thresholds, privacy safeguards, oversight mechanisms and protections for proprietary information. Large language models and multimodal systems have advanced rapidly, and public access to powerful generative tools has grown, factors that officials cite as driving the current policy review.