ChatGPT Images 2.0, cheap deepfakes drive AI fraud surge

ChatGPT Images 2.0 and low-cost deepfake tools are being used to produce fake IDs and live face swaps. They are fueling scams such as one that cost a Chicago man $69,000, and AI-assisted crypto frauds that average $3.2 million per case.

In early May 2026, researchers and security teams documented several high-profile synthetic videos and demonstrations made with widely available AI tools. The FBI director posted a video that appeared to include AI-generated footage nearly identical to a well-known music video, and a separate AI-generated clip of a mayoral candidate drew millions of views on social media.

Testing by journalists and security researchers found that the latest image-generation models can produce fake government IDs, prescriptions, bank alerts and screenshots of news articles. Researchers also demonstrated a real-time face-swap product that costs a few hundred dollars and runs on common video-conferencing platforms with little setup.

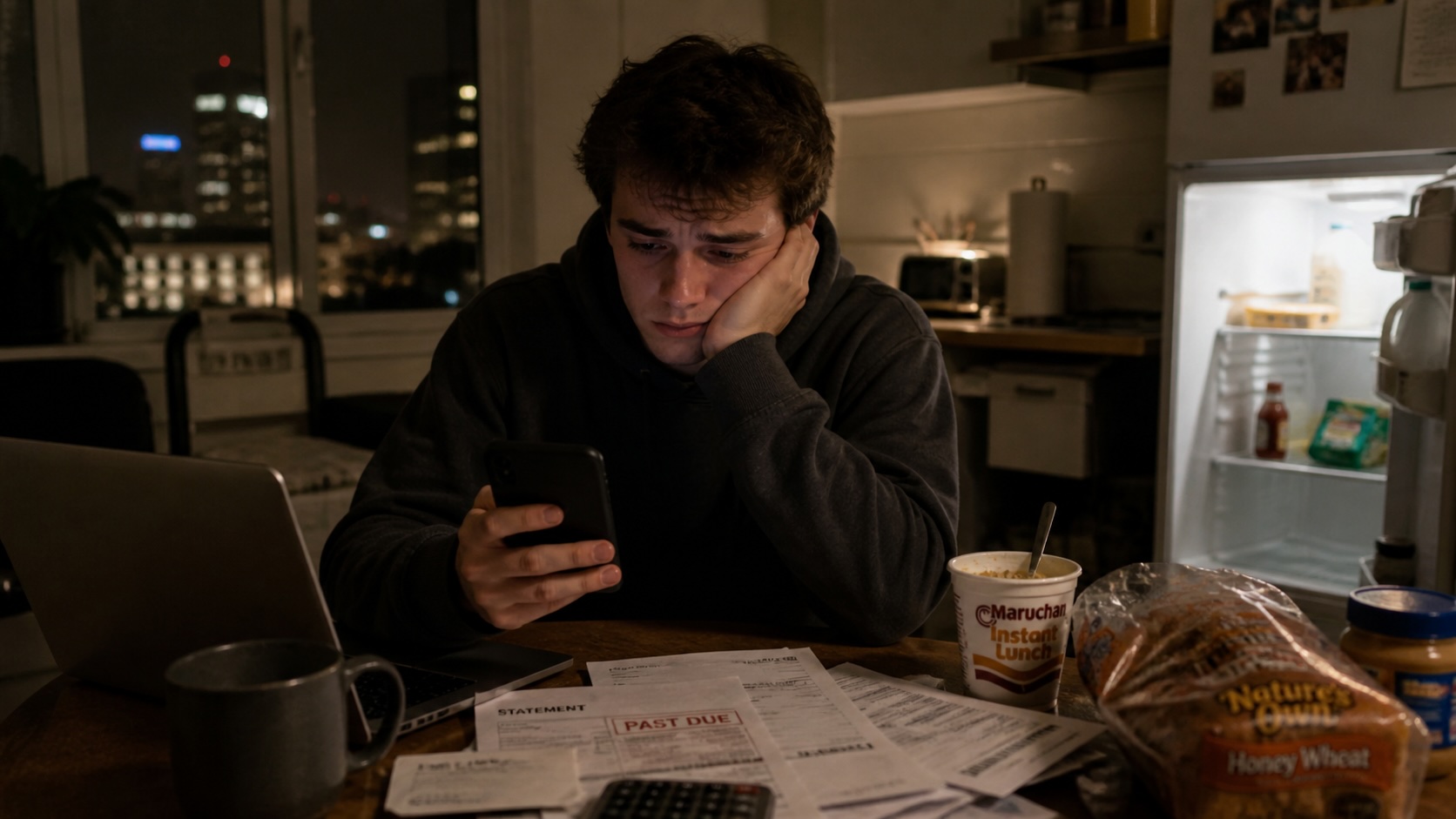

These techniques have already appeared in reported frauds. One Chicago resident transferred $69,000 after a scammer displayed an AI-generated U.S. Marshals badge during a video call. Chainalysis reported that fraudsters combine deepfakes, face-swap apps and large language models with romance and investment cons; its analysis found that AI-assisted crypto scams average about $3.2 million per case.

Security investigators linked earlier incidents to organized actors. In August 2025, attackers impersonated a crypto founder and stole $2 million. Some inquiries have connected state-level actors to deepfake video calls used in cryptocurrency fraud.

Vendors that track voice and video synthesis noted that the tools producing viral content are consumer-grade, widely available and improving faster than many institutional defenses. One vendor wrote that several recent incidents originated from tools that cost a few hundred dollars or are sold by subscription.

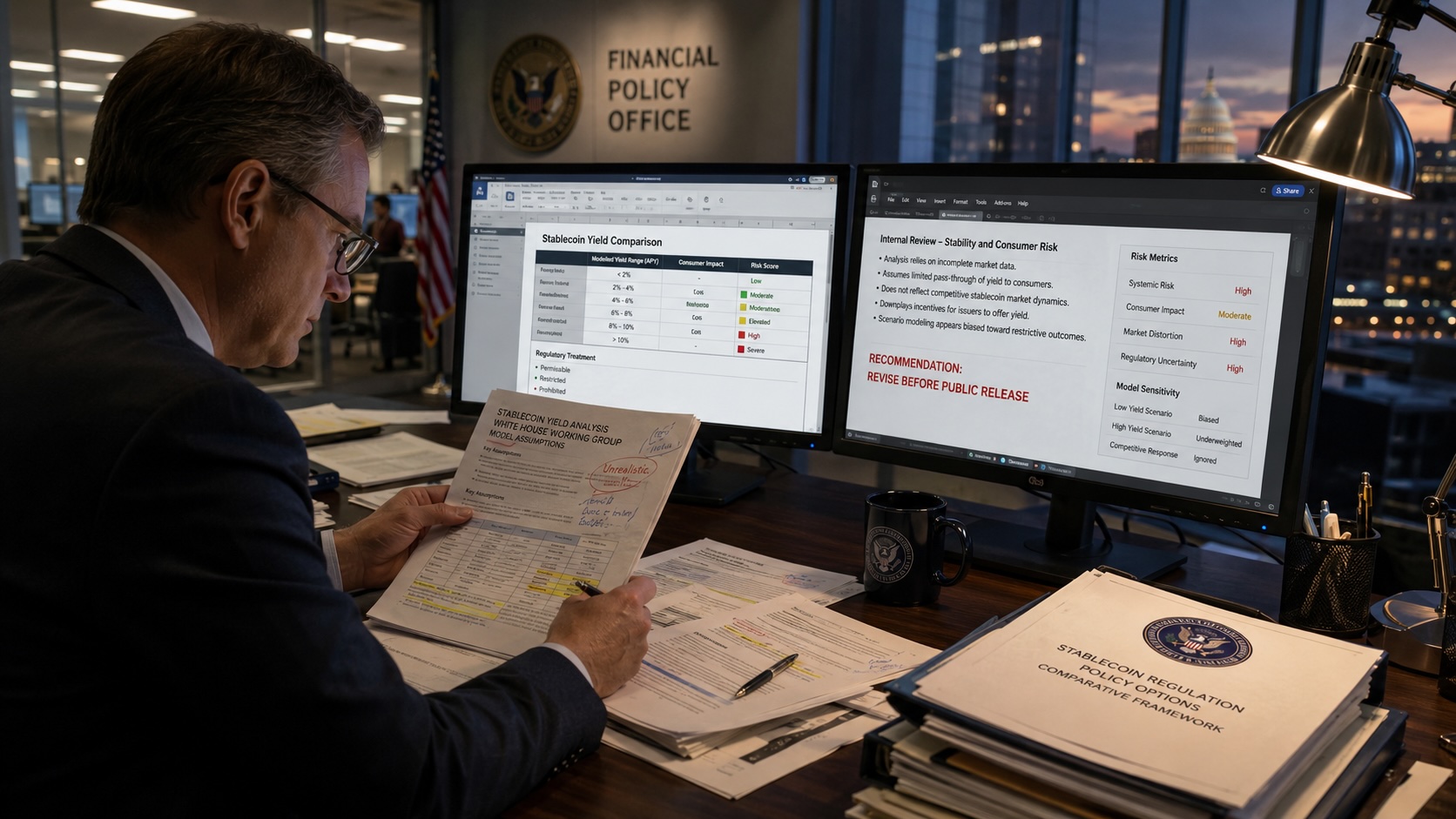

Financial institutions, health providers and government agencies face added challenges because forged documents and convincing live interactions can bypass some standard verification checks. A security researcher who reviewed the newest image models reported that the ease of creating fake receipts, bank alerts and IDs reduces the reliability of routine fraud screening.

Law enforcement and private security teams are testing detection tools and changing verification procedures. Some organizations are adding multi-factor identity checks, requiring in-person verification for high-value transactions, and warning customers to be skeptical of unsolicited requests that reference official badges or press screenshots. Platform operators are under pressure to remove synthetic content that impersonates public figures or is used to defraud users.

The incidents in early May and the reported frauds show that consumer-grade synthetic-media tools are being applied to impersonation and document-based scams. Researchers and investigators continue to monitor tool development and reported outcomes.