BitOK CPO: ‘Verify, don’t trust’ AI outputs

BitOK CPO Dmitry Nikolsky urged firms to keep human oversight and ‘verify, don’t trust’ after chatbots produced false or harmful legal and medical advice.

Dmitry Nikolsky, chief product officer at compliance analytics firm BitOK, warned organizations not to replace human judgment with artificial intelligence and urged a verification-first approach after recent incidents in which chatbots gave incorrect or harmful legal and medical guidance.

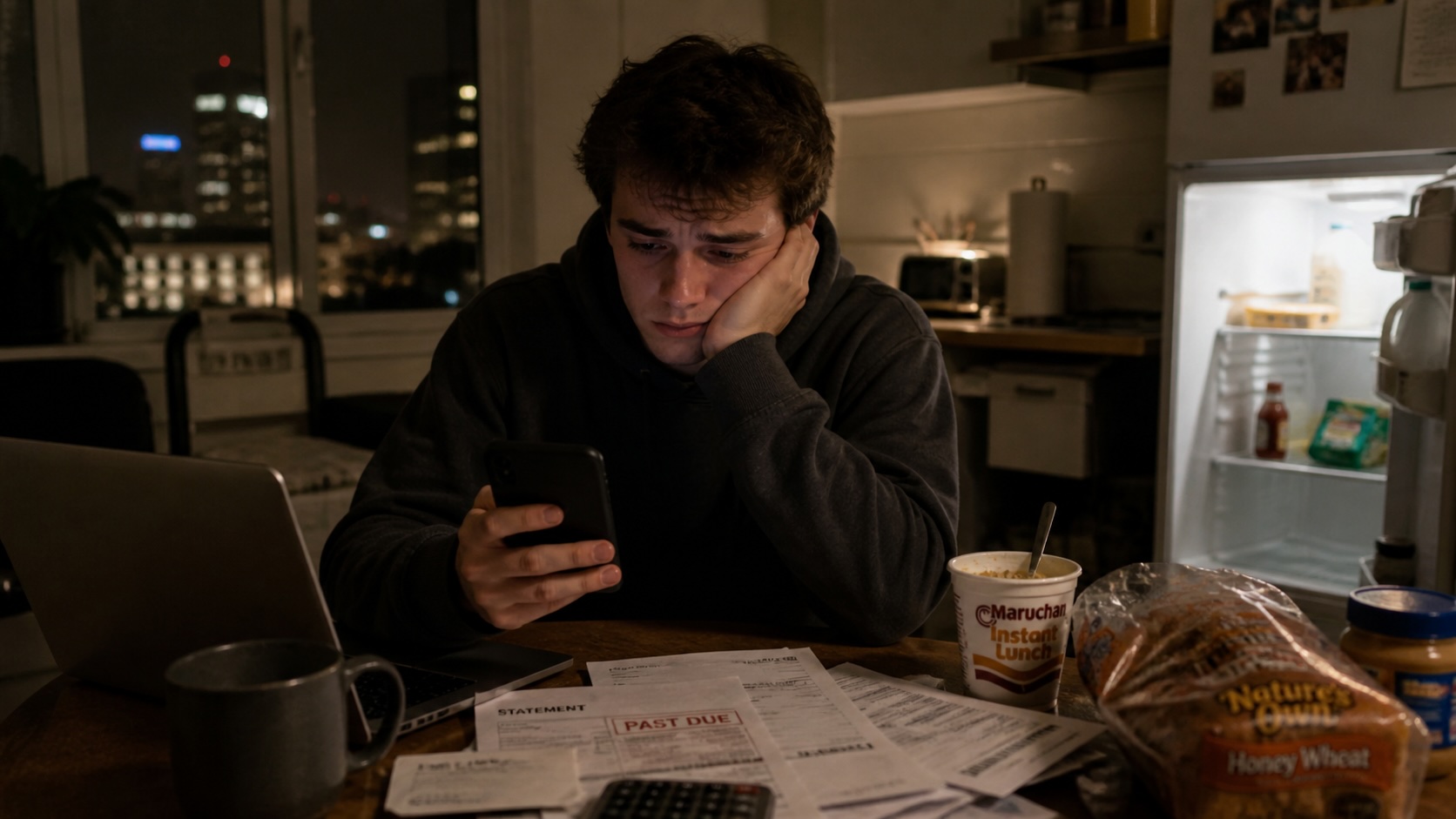

Nikolsky pointed to cases where an automated support tool advised people with eating disorders to count calories, a customer-facing bot invented an airline refund policy, and automated editorial tools mixed up images in sensitive reporting. He presented those examples as reasons firms should not treat large language models as authoritative decision-makers.

He explained that modern language models operate as statistical prediction engines that generate likely word sequences rather than reason about facts. According to Nikolsky, the models have no internal sense of truth and can “hallucinate,” producing fabricated references, policies or medical recommendations that appear plausible but are false.

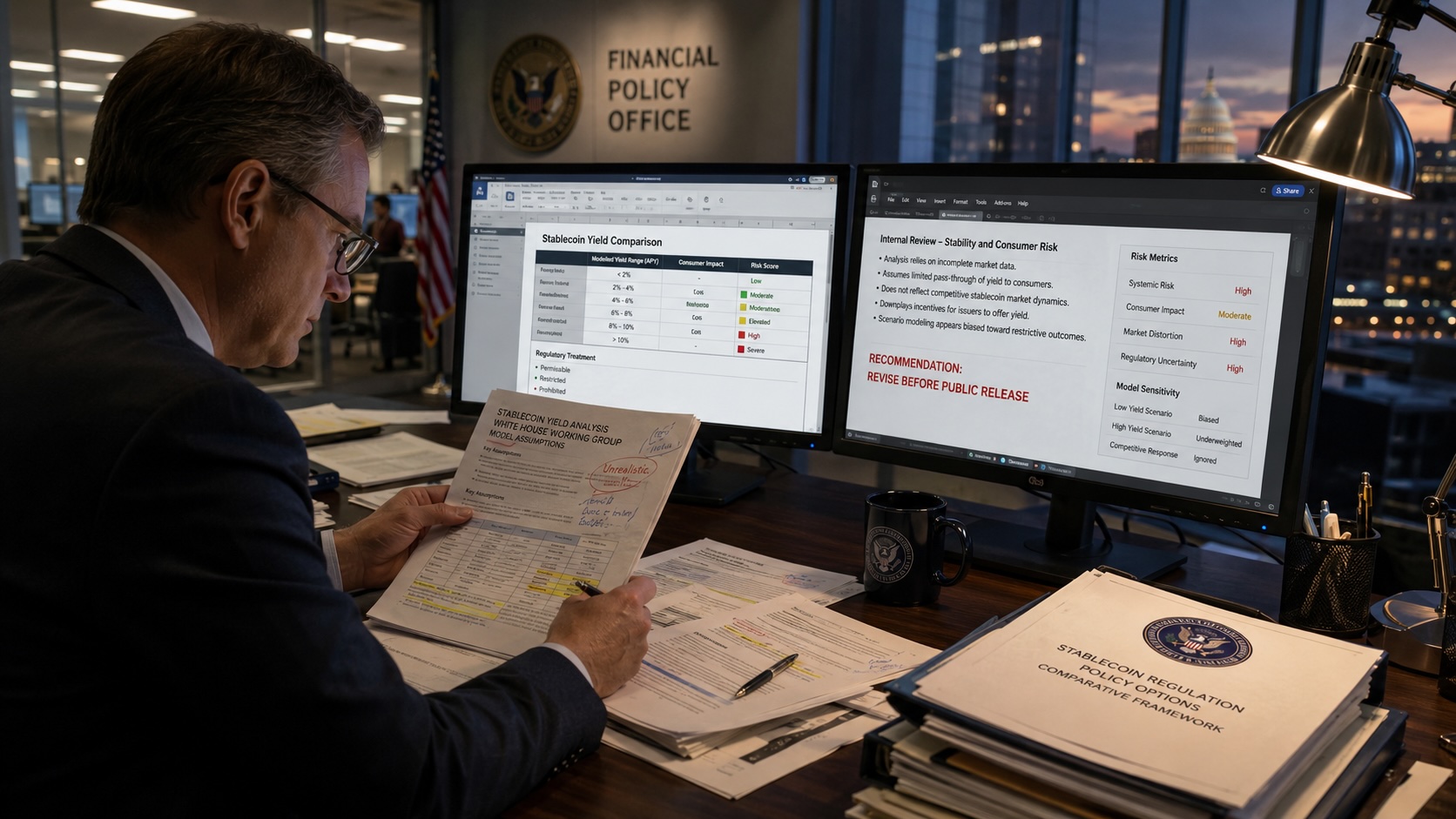

In financial crime compliance, he said, algorithms can block small legitimate transfers while letting complex laundering chains continue, because such systems apply formal rules without human judgment.

Nikolsky recommended that companies keep verification processes in place before accepting AI output. He advised using AI for routine tasks while preserving human judgment for legal, medical and other high-risk customer interactions. He added that verification should include access to training data or model explanations where feasible, cross-checking outputs against primary sources, and mandatory human review for consequential decisions.
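As a rough illustration of the workflow Nikolsky describes, the following Python sketch routes AI output through a verification gate before it is accepted. It is a minimal sketch under stated assumptions, not BitOK's implementation: the `AIOutput` fields, the `route` function, the routing labels and the set of high-risk domains are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass

# Assumption: the article names legal and medical interactions as high-risk;
# a real deployment would define its own list.
HIGH_RISK_DOMAINS = {"legal", "medical"}


@dataclass
class AIOutput:
    text: str
    domain: str                      # e.g. "legal", "medical", "routine"
    verified_against_source: bool    # True if cross-checked against a primary source
    consequential: bool = False      # True if the decision has serious impact


def route(output: AIOutput) -> str:
    """Decide what happens to an AI output before anyone acts on it."""
    # High-risk or consequential outputs always go to a person.
    if output.domain in HIGH_RISK_DOMAINS or output.consequential:
        return "human_review"
    # Routine outputs still need a cross-check against primary sources.
    if not output.verified_against_source:
        return "needs_verification"
    return "accept"


if __name__ == "__main__":
    draft = AIOutput("Refunds are available within 90 days.", "routine", False)
    print(route(draft))  # needs_verification
```

The design choice here is that nothing is accepted by default: an output is accepted only after it clears both the risk check and the source check.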

He described an operational risk he calls “false confidence,” where polished dashboards and summaries lead analysts to trust algorithmic results more than their own expertise. He gave two examples: programmers who rely on code-completion tools and lose architectural judgment, and analysts who stop consulting primary documents after years of accepting AI-generated summaries.

Nikolsky cited the long tradition of cautionary tales such as the Golem and Frankenstein, and pointed to safeguards he considers practical: emergency shutdown mechanisms, clear assignment of responsibility, and rigorous testing of systems before deployment.

He also cited industry studies showing that 55% of companies that quickly replaced staff with AI later reported negative outcomes, including customer losses and damaged reputations. Nikolsky urged executives to design workflows that preserve human oversight and to prioritize verification when integrating AI into business operations.